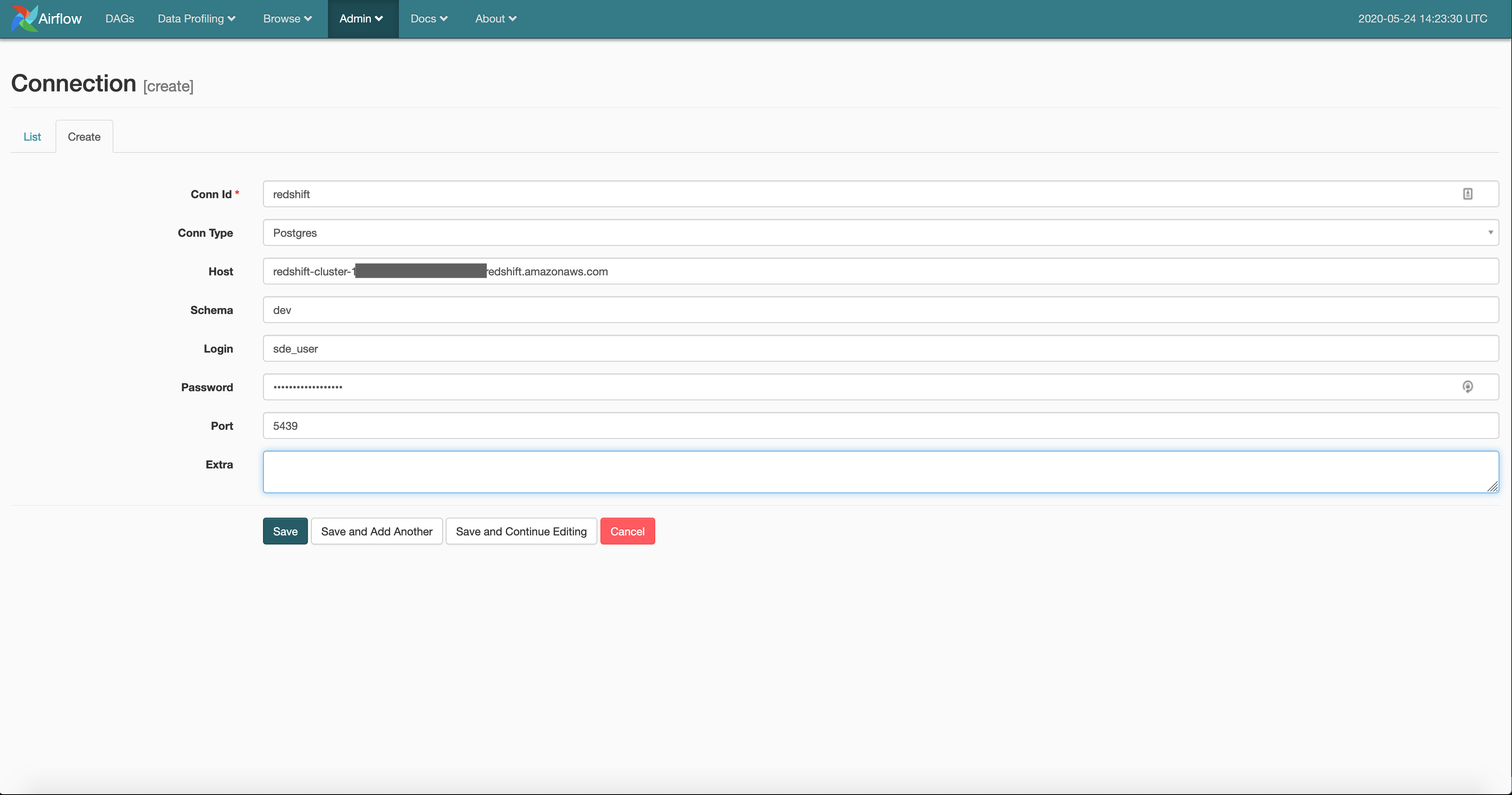

Airflow dag config

8/12/2023

Apache Airflow is a data pipeline orchestration tool. It helps run periodic jobs written in Python, monitor their progress and outcome, retry failed jobs and convey events in a colourful and concise Web UI.

Jobs, known as DAGs (directed acyclic graphs), have one or more tasks. Tasks can be any sort of action, such as downloading a file, converting an Excel file to CSV or launching a Spark job on a Hadoop cluster. Airflow isolates the logs created by each task and presents them when the status box for that task is clicked. This makes investigating failures much quicker than searching endlessly through lengthy, aggregated log files.

Over the past 18 months, nearly every platform engineering job specification I've come across has mentioned the need for Airflow expertise. Airflow's README file lists over 170 firms that have deployed Airflow internally. Even Google has taken notice: they've recently announced a managed Airflow service on Google Cloud Platform called Composer. Composer uses Kubernetes to execute jobs in their own isolated Compute Engine instances, and the resulting logs are stored on Google's Cloud Storage service.

When Airflow began life in 2014, there were already a number of other tools providing functionality that overlapped with Airflow's offerings. But Airflow was unique in that it's a reasonably small Python application with a great-looking Web UI. Its installation process is short and mirrors that of most Python-based web applications. Jobs are written in Python and are easy for newcomers to put together. I believe these are the key reasons for Airflow's popularity and dominance in the orchestration space.

Airflow has a healthy developer community behind it. As of this writing, 486 engineers from around the world have contributed code to the project. These engineers include staff from data-centric firms such as Airbnb, Bloomberg, Japan's NTT (the world's 4th-largest telecoms firm) and Lyft. The community is strong enough that the original author, Maxime Beauchemin, has been able to step back from making major code contributions over the past two years without a significant impact on development velocity.

I've already covered performing a basic Airflow installation as part of a Hadoop cluster and put together a walk-through on creating a Foreign Exchange Rate Airflow job. In this blog post, I'm going to walk through six features that should prove helpful in customising your Airflow installations.

The logging configuration begins with `import os` and `from airflow import configuration as conf`, sets `S3_LOG_FOLDER = 's3:///airflow-logs/'`, and reads `LOG_LEVEL`, the `conf.get('core', 'log_format')` value and `BASE_LOG_FOLDER` from `conf`.
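A call such as `conf.get('core', 'log_format')` reads the `log_format` option from the `[core]` section of Airflow's configuration, which lives in an INI-style `airflow.cfg` file; Airflow also lets an environment variable named `AIRFLOW__{SECTION}__{KEY}` override a file value. The sketch below uses only Python's standard `configparser` to illustrate that section/option lookup and override order. It does not call Airflow: the `get_setting` helper, the sample file contents and the `LOG_FORMAT` name are hypothetical, and section names differ between releases (newer Airflow versions keep these options under `[logging]` rather than `[core]`).

```python
import configparser
import os

# Hypothetical airflow.cfg excerpt; values are illustrative, not Airflow defaults.
# "%%" is configparser's escape for a literal "%".
SAMPLE_CFG = """
[core]
base_log_folder = /tmp/airflow/logs
logging_level = info
log_format = [%%(asctime)s] %%(levelname)s - %%(message)s
"""


def get_setting(parser, section, key):
    """Return section/key, letting an AIRFLOW__SECTION__KEY env var win.

    Simplified sketch of the override order, not Airflow's implementation.
    """
    env_name = f"AIRFLOW__{section.upper()}__{key.upper()}"
    if env_name in os.environ:
        return os.environ[env_name]
    return parser.get(section, key)


parser = configparser.ConfigParser()
parser.read_string(SAMPLE_CFG)

# Mirror the shape of the logging configuration described above.
LOG_LEVEL = get_setting(parser, "core", "logging_level").upper()
LOG_FORMAT = get_setting(parser, "core", "log_format")
BASE_LOG_FOLDER = get_setting(parser, "core", "base_log_folder")
```

Setting `AIRFLOW__CORE__LOGGING_LEVEL=debug` before running the sketch would make `LOG_LEVEL` come out as `DEBUG` without touching the file, which is the usual way to vary settings per environment.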
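To make the DAG and task vocabulary concrete, here is a minimal, Airflow-free sketch in plain Python. It is not Airflow's scheduler or API: `run_dag`, the `upstream` mapping and the status strings are hypothetical names chosen for illustration. It runs tasks in dependency order using the standard library's `graphlib.TopologicalSorter` (Python 3.9+), retries a failed task, and keeps each attempt's log lines under its own `(dag_id, task_id, try_number)` key, loosely mirroring how Airflow shows one task's log instead of a single aggregated file.

```python
from graphlib import TopologicalSorter


def run_dag(dag_id, tasks, upstream, max_retries=1):
    """Run tasks in dependency order with retries and per-attempt logs.

    tasks: task_id -> callable that receives a `log` function.
    upstream: task_id -> set of task_ids that must succeed first.
    Returns (status, logs); logs is keyed by (dag_id, task_id, try_number).
    """
    graph = {task_id: set(upstream.get(task_id, ())) for task_id in tasks}
    status, logs = {}, {}
    # static_order() yields every task after all of its upstream tasks.
    for task_id in TopologicalSorter(graph).static_order():
        if any(status[up] != "success" for up in graph[task_id]):
            status[task_id] = "upstream_failed"  # don't run on missing inputs
            continue
        for try_number in range(1, max_retries + 2):
            lines = logs.setdefault((dag_id, task_id, try_number), [])
            try:
                tasks[task_id](lines.append)
                status[task_id] = "success"
                break
            except Exception as exc:
                lines.append(f"ERROR: {exc}")
                status[task_id] = "failed"
    return status, logs


# Usage: download -> convert -> load, where convert fails once and is retried.
attempts = {"convert": 0}


def download(log):
    log("downloading file")


def convert(log):
    attempts["convert"] += 1
    if attempts["convert"] == 1:
        raise RuntimeError("transient failure")
    log("converted Excel file to CSV")


def load(log):
    log("loaded CSV")


status, logs = run_dag(
    "example_dag",
    {"download": download, "convert": convert, "load": load},
    {"convert": {"download"}, "load": {"convert"}},
    max_retries=1,
)
```

Because tasks are visited in topological order, every upstream status is known before a task starts; if an upstream task ends in anything other than success, its dependants are marked `upstream_failed` instead of running. Looking up `logs[("example_dag", "convert", 1)]` returns only the failed first attempt, which is the kind of focused view the per-task log isolation described above provides.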